New tools mean new rules—and the shakeup is just beginning

In the workplace, any new form of technology shuffles the deck of knowledge, productivity and—ultimately—power. Artificial Intelligence (AI) is no exception. But who is currently putting AI to use—and who is profiting?

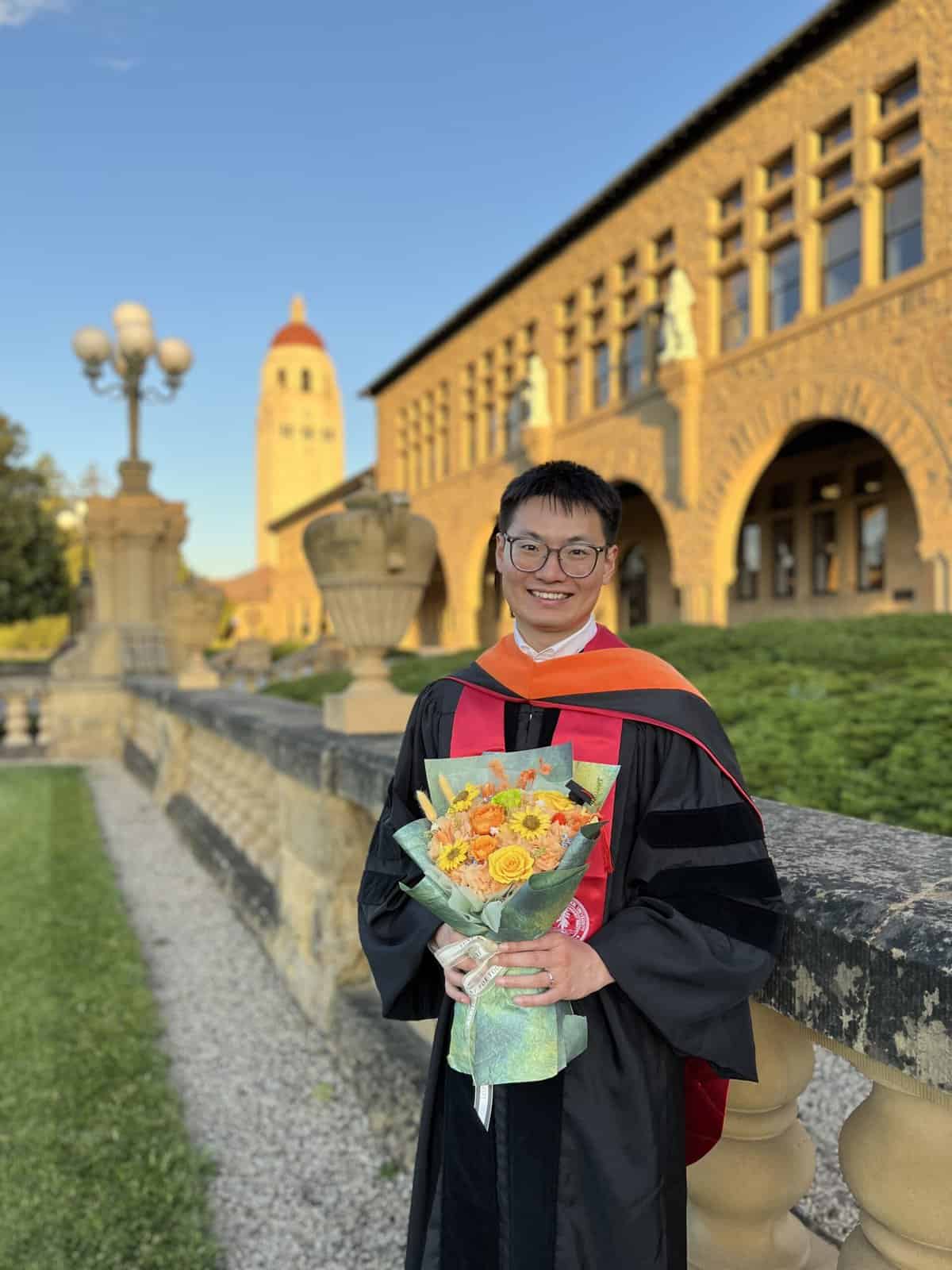

Enter Hancheng Cao.

Hancheng Cao joined Goizueta in 2025 as an assistant professor of information systems and operations management, and holds a courtesy appointment as assistant professor in the Department of Computer Science at Emory College of Arts and Sciences. He has long nurtured an interest in the dynamic dialogue between humans and technology, exploring the ways that details like design and interface influence our relationship with new tools. Before joining Emory University, he worked at Microsoft’s Office of Applied Research as a postdoctoral researcher, and he has also done research at the Allen Institute for AI and collaborated with organizations like Asana and various startups. Coming to the business sector with a background in electrical engineering and a computer science PhD from Stanford, he has unique insight into the intersection of AI, people, and work environments.

In the aftermath of the AI boom, part of Hancheng’s job is to figure out what kind of hand stakeholders have inherited, and how this will change the nature of the game. A recent paper published with colleagues unpacks the uneven ways in which individuals and companies are employing AI in business writing and what implications that has for the future.

“AI does not just make individuals more productive,” says Hancheng. “It reorganizes how work is coordinated, who makes decisions, and where authority sits. If we treat AI as a personal tool instead of an organizational actor, we may miss its most profound consequences.”

His work grapples with big questions. What is the line of demarcation between human- and AI-generated work? Do companies have an obligation to disclose when they’re using AI to communicate with customers? Will AI be a profound equalizer, or will it exacerbate power disparities that already exist?

Hancheng reminds business leaders that they shouldn’t treat AI adoption as the finish line. What matters is whether it actually improves outcomes, protects trust, and helps a brand stand out—otherwise it can erode value without anyone noticing.

Describe your research field in six words.

“How AI reshapes organizations and work.”

If you had to give readers one headline number from the paper, what would it be and why?

By late 2024, about 24% of corporate press releases show signs of LLM-assisted writing. It is a clear indicator that generative AI is already embedded in core business communication, not a niche behavior.

Do you feel you’ve developed a knack for detecting AI in text? Are there certain “tells” you look for?

At the individual text level, it is getting harder to be confident, especially when people lightly edit.

You find a “diffusion curve”: a short lag after ChatGPT’s release, then a rapid rise, then stabilization by late 2023. Why that shape?

The pattern fits a classic adoption curve: a short lag while people learn the tool and workflows adjust, then a rapid ramp as it becomes easy to use and widely available, then a plateau as early adopters saturate and frictions slow broader uptake. The paper points to frictions that could slow the next phase: institutional inertia, technological limits, and audience backlash when AI text feels less authentic.

Your team makes it clear that AI use in business writing is already ubiquitous. What should business leaders conclude from that—panic, adapt, or something else?

Adapt, thoughtfully. The numbers suggest this is already mainstream, so the question is not whether AI will be used, but how to use it responsibly while protecting credibility and clarity. The paper highlights both efficiency gains and risks to authenticity and trust in public-facing messages.

Do you worry about “same-ness”—companies’ communications starting to sound alike? What risks does that create for brand differentiation? What kind of writing is likely to become more valuable now that AI is so accessible?

Yes. The paper flags concerns about uniformity and homogenization as AI writing spreads. That creates brand risk: if everyone sounds the same, differentiation weakens and trust can erode.

“Writing that becomes more valuable is high-signal writing: clearly owned by accountable humans with unique characteristics.”

From what you’ve gleaned so far, do you think the trajectory of AI is to become a “great equalizer” or do you think it is exacerbating disparities of power?

Both forces are plausible, but the paper provides suggestive evidence for some equalizing effects in certain contexts. For example, areas with lower educational attainment show modestly higher LLM adoption in consumer complaints, consistent with the idea that AI can help people express themselves effectively. At the same time, unequal access, organizational power, and credibility dynamics can still widen gaps, so outcomes likely depend on context.

In job postings, you find younger/smaller firms use LLMs more. If newer firms are using AI to scale communications and hiring faster, does this change competitive dynamics, especially for marketing and recruiting?

It can. If newer firms can scale communications faster, they can compete more aggressively for attention and talent, even with fewer people. But it can also compress differentiation if postings become more generic and interchangeable.

You cite evidence that AI-written job posts can generate more applications but fewer hires. What does that suggest about the quality versus quantity tradeoff when AI drafts business text?

AI-drafted text may broaden reach and increase volume, but it can reduce precision, signaling, or fit, making it harder to convert applicants into hires.

Do companies have an obligation to disclose AI assistance? What about individuals?

In high-stakes public communication, disclosure is worth serious consideration because trust and accountability are core. A practical approach is to disclose when AI materially shaped content, especially for claims, policies, or sensitive topics, while avoiding performative labeling for trivial edits. For individuals, expectations should be context dependent: higher standards where the audience relies on authenticity or expertise, lower standards for routine assistance.

If you were advising a CEO or communications leader, what are 2–3 guardrails you’d implement this year for AI-assisted writing?

1. An accountable human owner for every external message, with a clear sign-off process.

2. Fact-checking and source verification for any claims, numbers, or quotes, especially in regulated or investor-facing contexts.

3. Style and substance rules that preserve brand voice and specificity, including requiring concrete details and banning vague filler.

What do you see as the biggest limitation of your research paper?

The core limitation is detectability: the method cannot reliably capture text that was heavily edited by humans or produced by models that closely mimic human writing, so the estimates should be interpreted as a lower bound of adoption.

What’s the clearest risk to public trust if AI-assisted writing becomes the default?

An authenticity collapse: audiences stop believing that institutional messages reflect real intent, care, or accountability. That can weaken trust even when the content is accurate.

What’s the next research question you most want answered—and why should business readers care?

What is the causal impact of LLM-assisted writing on outcomes such as complaint resolution, press release credibility, investor response, applicant quality, and hiring success? Business readers should care because adoption alone is not the win; performance, trust, and differentiation determine whether AI writing creates value or quietly destroys it.

Goizueta faculty apply their expertise and knowledge to solving problems that society—and the world—face. Learn more about faculty research at Goizueta.